Spec-Driven Agents

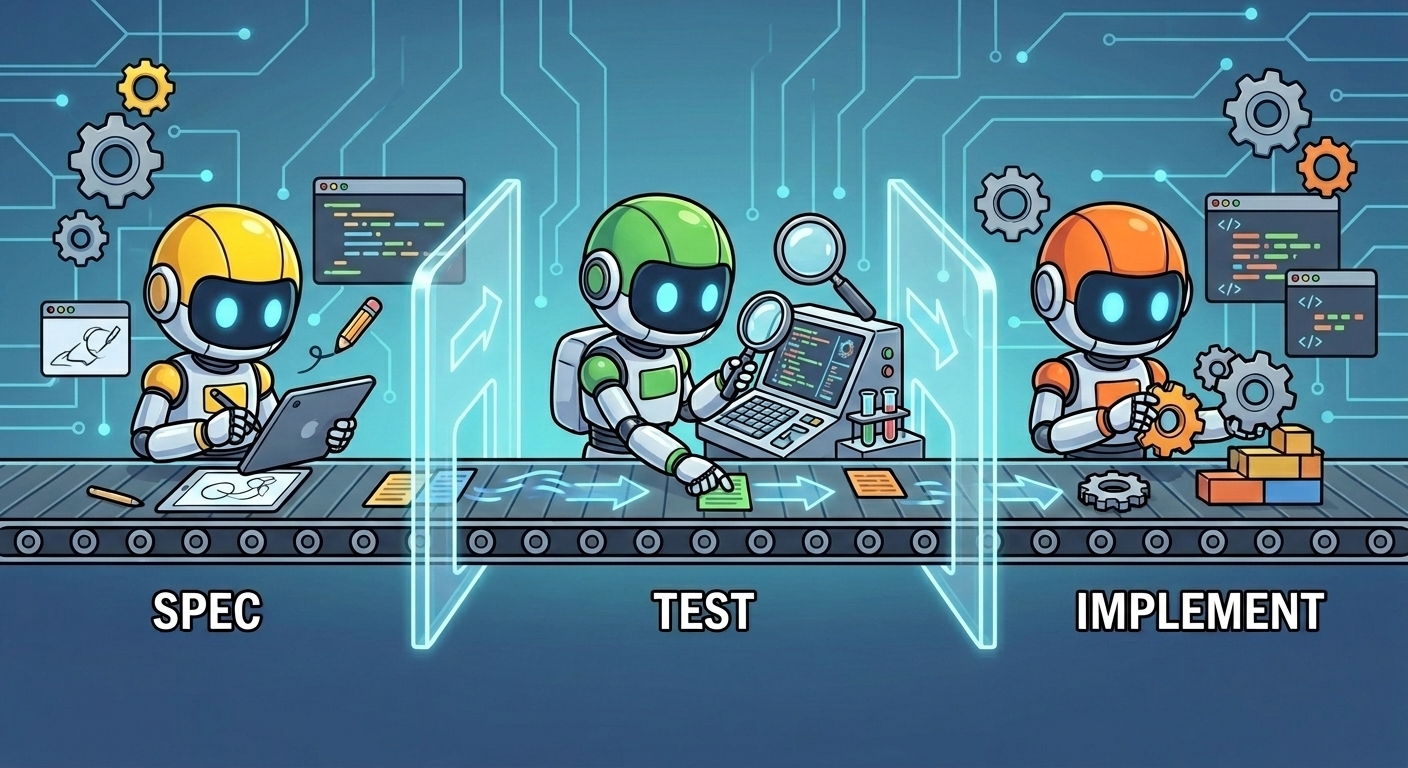

A three-lane workflow for AI-assisted development: spec, test, and implement, each in its own isolated subagent.

The Problem with AI-Assisted Development

When you use an AI coding agent in a single chat session to go from idea to implementation, things tend to blur. The agent holds the spec, the tests, and the code in one context. When it decides to "adjust the spec" to make tests pass, or skips writing real tests because the implementation is already done, you've lost the discipline that makes software trustworthy.

The usual answer is to impose rules: "don't change the spec", "write tests first". But rules in a single chat are easy to accidentally violate, hard to enforce, and easy to forget across sessions.

Three Lanes, Three Agents

The spec-driven-agents project is my answer to this: split the workflow into three isolated subagent lanes that communicate only via committed files. No shared chat history, no leaking context across lanes.

Each agent has a clear job:

- @spec interviews you and produces a structured spec at specs/<slug>/spec.md. It asks about acceptance criteria, scope, and edge cases, and commits the result.
- @test reads only the spec and produces complete, executable Gherkin scenarios with real step definitions. Not stubs. The suite must be red because production code is missing, not because the steps throw "not implemented".
- @impl reads the spec and features, verifies the suite is red for the right reasons, then drives it green one scenario at a time using classic TDD: red, green, refactor.

Lane Discipline

The key insight is that each agent communicates only through committed files. The @spec agent writes to specs/. The @test agent writes to features/ and step definitions. The @impl agent writes to src/ and unit tests. None of them touches another's territory, which is enforced both by OpenCode permission frontmatter and by explicit prompt instructions.
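To make the lane boundaries concrete, here is a minimal sketch of the kind of check they imply. It is not how the project enforces them (that happens through permission frontmatter and prompts). The `LANE_WRITES` table, the glob patterns, and the `may_write` helper are all hypothetical names chosen for illustration.

```python
from fnmatch import fnmatch

# Hypothetical lane -> writable glob patterns. In a real project these would
# come from .spec-agents.toml and the agents' permission frontmatter.
LANE_WRITES = {
    "spec": ["specs/*"],
    "test": ["features/*", "tests/acceptance/*"],
    "impl": ["src/*", "tests/unit/*"],
}


def may_write(lane: str, path: str) -> bool:
    """Return True if `path` falls inside the lane's writable territory.

    fnmatch's `*` also matches `/`, so "specs/*" covers nested files
    such as specs/user-login/spec.md.
    """
    return any(fnmatch(path, pattern) for pattern in LANE_WRITES.get(lane, []))


if __name__ == "__main__":
    print(may_write("spec", "specs/user-login/spec.md"))
    print(may_write("spec", "src/login.py"))
```

An unknown lane gets an empty pattern list, so it is denied by default rather than allowed by accident.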

A per-project .spec-agents.toml file holds the authoritative paths and commands. The template is shown below; when you run /init-spec-workflow, it tries to detect the language and fill in the correct paths and commands:

```toml
# Generated by /init-spec-workflow. Edit freely; agents read this at runtime.
# This file is the per-project source of truth for spec-driven-agent paths and commands.

schema_version = 1
language = "__LANGUAGE__"

[paths]
specs = "__SPECS__"
features = "__FEATURES__"
acceptance_tests = "__ACCEPTANCE_TESTS__"
unit_tests = "__UNIT_TESTS__"
src = "__SRC__"
agentlog = "__AGENTLOG__"

[commands]
test_acceptance = "__CMD_TEST_ACCEPTANCE__"
test_unit = "__CMD_TEST_UNIT__"
build = "__CMD_BUILD__"

[lanes]
# Optional extra glob patterns each agent is allowed to write to,
# beyond the corresponding [paths] entry. Useful for languages where
# tests live next to source (Go, Rust inline tests).
spec_writer_extra_writes = []
test_author_extra_writes = []
```

If the file is missing, agents stop and tell you to run /init-spec-workflow.

Escalation Instead of Approximation

One very useful discipline the @test agent enforces: if an Acceptance Criterion (AC) cannot be expressed as a pure-code assertion with the project's current test tooling, it does not approximate. It escalates.

It appends a note to .agentlog/<slug>.md and stops. You then go back to @spec to rewrite or drop the AC, or you widen the project's test dependencies. No silent hand-waving.

This matters because a test that says "this UI button is clicked" but actually just asserts that a function exists is worse than no test at all: it gives false confidence.
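The escalation step amounts to "append a note and stop". A rough sketch of the append follows. The note layout, the `escalate` function, and its parameters are hypothetical; the article only states that a note is appended to .agentlog/<slug>.md.

```python
from datetime import datetime, timezone
from pathlib import Path


def escalate(agentlog_dir: Path, slug: str, criterion: str, reason: str) -> Path:
    """Append an escalation note to <agentlog_dir>/<slug>.md and return its path.

    The note format below is an assumption made for illustration.
    """
    agentlog_dir.mkdir(parents=True, exist_ok=True)
    log_file = agentlog_dir / f"{slug}.md"
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M UTC")
    note = (
        f"\n## Escalation ({stamp})\n"
        f"- Criterion: {criterion}\n"
        f"- Reason: {reason}\n"
        "- Next step: rewrite or drop the AC via @spec, or widen the test tooling\n"
    )
    # Open in append mode so earlier notes for the same slug are preserved.
    with log_file.open("a", encoding="utf-8") as handle:
        handle.write(note)
    return log_file
```

Appending rather than overwriting keeps a history per feature, which makes it easier to see whether the same criterion keeps coming back.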

Getting Started

Note that this currently works only with OpenCode.

The project installs with a single script:

```shell
git clone https://github.com/gertjana/spec-driven-agents
cd spec-driven-agents
./install.sh
```

This symlinks the agents, slash commands, and scaffold script into ~/.config/opencode/. Then start opencode in any project and run the following inside it:

```
/init-spec-workflow
...
@spec let's spec a new "user-login" feature
# review specs/user-login/spec.md, edit if needed
@test user-login
@impl user-login
```

Languages with auto-detected paths include Rust, Scala, JS/TS, Python, Go, Elixir, Java, and Kotlin.

What I've Learned

Running some real sessions with this setup has surfaced a few recurring patterns worth noting:

LLMs like to take shortcuts. In one of the first tests I ran with this setup, the @test agent wrote only test stubs because it "couldn't write the tests as it did not yet know the implementation".

Before @impl writes any code, it must confirm the acceptance suite is red for production-side reasons. This catches a whole category of bugs where tests pass vacuously (wrong import path, wrong test command, tests not discovered) before you've committed to an implementation direction.
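A "red for the right reasons" check could look roughly like the sketch below. It classifies the result of an acceptance run from its exit code and output. The marker strings are assumptions; real test runners word these messages differently, so they would need tuning per project. `RedCheck` and `check_red` are hypothetical names, not part of the project.

```python
from dataclasses import dataclass

# Hypothetical substrings suggesting the suite fails on the test side
# (stubbed steps, undiscovered tests) rather than on missing production code.
WRONG_REASON_MARKERS = (
    "not implemented",
    "undefined step",
    "no tests ran",
    "0 scenarios",
)


@dataclass
class RedCheck:
    ok: bool
    reason: str


def check_red(exit_code: int, output: str) -> RedCheck:
    """Decide whether a failing acceptance run is red for production-side reasons."""
    if exit_code == 0:
        # Green before any production code exists means the tests are vacuous.
        return RedCheck(False, "suite is green before any production code exists")
    lowered = output.lower()
    for marker in WRONG_REASON_MARKERS:
        if marker in lowered:
            return RedCheck(False, f"suite is red for a test-side reason: {marker!r}")
    return RedCheck(True, "suite is red and no test-side failure marker was found")
```

A string-matching heuristic like this only flags the obvious cases. It is a cheap gate, not a substitute for reading the failure output.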

When @test escalates, resist the urge to patch the test. Go back to the spec. The AC was either too implementation-specific, too vague, or dependent on tooling you don't have. Fixing it at the spec level keeps the contract honest.

The handoff from @spec to @test is not automatic: you read the spec first. This is a feature, not a bug. It's your last cheap chance to catch scope creep, missing edge cases, or criteria that sound right but won't survive contact with an actual test.

Conclusion

In my opinion, the two most important concepts in working with agentic AI are context and guardrails, and this approach covers both:

- Context: The project itself and the detailed feature spec provide a clear context.

- Guardrails: Implicit isolation between spec, test, and implementation.

The Repo

The full project (agents, templates, slash commands and install script) is at github.com/gertjana/spec-driven-agents.